How to Mitigate Adverse Impact

How to stop adverse impact manifesting during your recruitment process to support inclusive and legal hiring.

Written by: VidCruiter Editorial Team

Reviewed by: VidCruiter Editorial Team

Last Modified: Feb 3, 2026

TL;DR

The use of artificial intelligence (AI) in hiring is growing. But talent acquisition professionals remain divided on how to implement AI tools in a principled way, or whether ethical AI hiring is even possible.

A recent Mercer survey of people who lead in-house recruitment found 40% were happy to use AI to source and engage talent to fill their pipelines, while 42% said they don’t use it or wouldn’t consider using AI during hiring. That split makes sense given that concerns about AI bias are high and that organizational compliance and brand reputation are on the line.

Adopting AI poses both a technical challenge and a moral dilemma. And the two go hand in hand, because understanding the capabilities and limitations of AI tools is the cornerstone of building an ethical AI hiring practice. Discover how to conduct an effective AI hiring tools evaluation to get a head start.

HR budgets are stretched. It’s easy to see why the lure of saving time and cutting costs holds appeal for busy recruiters. The Academy to Innovate HR found 49% of HR professionals use AI in recruitment, but that use was largely vendor-driven, for example through AI features built into recruitment platforms.

Discerning HR leaders are asking themselves: to what extent do the predictive models used in AI recruitment tools impact their organization’s commitment to building trust, facilitating human connection, and practicing inclusive hiring?

Rather than avoiding AI entirely, HR leaders can help mitigate organizational risks by ensuring they’re across these key ethical issues:

One of the most pressing ethical concerns facing HR is the unfair treatment of candidates (or employees) through algorithmic bias, which is introduced through poor training data or the design of the predictive model used in AI tools.

For example, studies of facial recognition technology have found it’s most accurate in identifying white male faces. It’s significantly more error-prone when it comes to recognizing faces of color, especially those of dark-skinned women (MIT Media Lab). That’s because the systems were trained using mostly images of white men.

AI-led decisions also create a situation where proxy discrimination can occur. Recently, an unsuccessful job applicant sued Sirius XM Radio, alleging its AI-powered Applicant Tracking System assigned scores based on data points that proxy for race, such as home zip code, which led to biases that resulted in his rejection for multiple positions.

Federal Anti-Discrimination Laws Apply to AI Tools

If unproven predictive algorithms are used to directly assess candidates, organizations can’t be certain that AI bias based on protected characteristics like race, sex, age, or disability isn’t disadvantaging some applicants. In addition to being unethical, that could put an organization in breach of federal laws enforced by the U.S. Equal Employment Opportunity Commission (EEOC).

The so-called ‘black box’ problem — being largely in the dark about the quality and accuracy of the code and data underpinning an AI tool’s outputs — can make errors and biases hard to uncover.

Not being able to easily interpret AI outputs also makes hiring decisions made by AI less defensible. That makes it harder to communicate your process to candidates, or provide strong evidence if your HR team is hit with claims of negligence or discrimination.

Transparency and explainability will only become more important with the growing implementation of responsible AI recruitment legislation. Multiple US states now have, or are considering, laws that require employers to track and share how and when they use AI in hiring, and how they mitigate its risks.

Blind Trust in Third-Party AI Tools is Risky

More than half (55%) of AI-related failures or ethical lapses that hurt organizations arise from third-party AI tools, according to consulting firm BCG. Organizations in highly regulated industries were more likely to experience impacts from AI failures, in the form of reputational damage, financial losses, and regulatory compliance challenges.

Over 80% of Americans are concerned about how companies use the data they collect about them (Pew Research).

About the same share of people believe that companies’ use of AI will lead to their personal information being used in ways they wouldn’t be comfortable with.

To be equipped to comply with a complex web of international and local data privacy and security laws, and maintain an upstanding employer brand, employers need to get clarity on:

How humans feel during your organization’s recruitment process is central to the ethics of AI in hiring. Heavy use of AI can make candidates feel disrespected, especially if an organization hasn’t proactively explained AI’s role in the process.

Automated screening tools like AI-led interviews — which may exclude applicants without human review — are particularly ethically dangerous because they dehumanize the recruitment process. Job seekers and recruiters may both suffer from a lack of agency, as having little control over the choices that shape an outcome can be disillusioning.

AI Lacks Empathy, But Isn’t Inherently Unethical

A 2022 study published in the ‘AI and Ethics’ journal that took a human rights perspective on AI recruiting concluded that ethical risks “can be exacerbated by unreflective use of AI recruiting tools.” Fit-for-purpose AI adoption is key.

The authors highlight how AI can’t display empathy, interpret contexts or fully value individuals, and therefore should only augment human-led recruiting. “AI should not fully substitute humans in the recruiting process because the personal interaction between recruiters and applicants is important to counteract the process’s dehumanization.”

While plenty of individual recruiters dabble with AI, a 2024 survey of almost 500 HR leaders found just 14% had actually incorporated AI technology into their organization’s recruitment stack (Mercer). A key reason cited was poor understanding about the tools and their efficacy.

Leading organizations are committed to responsible AI adoption and governance. Part of that commitment is properly evaluating third-party tools, across multiple criteria, before they’re systematically introduced.

The core evaluation criteria to cover when exploring AI hiring tools include:

Since AI is incapable of matching the wisdom, decency and compassion of a real human, ethical AI requires human decision-makers to be involved. AI systems designed with human-in-the-loop principles in mind are more likely to give people opportunities to consider insights and fix incorrect outputs, which helps avoid mistakes and lets a system improve over time.

AI-Assisted Decision-Making is the Ethical Choice

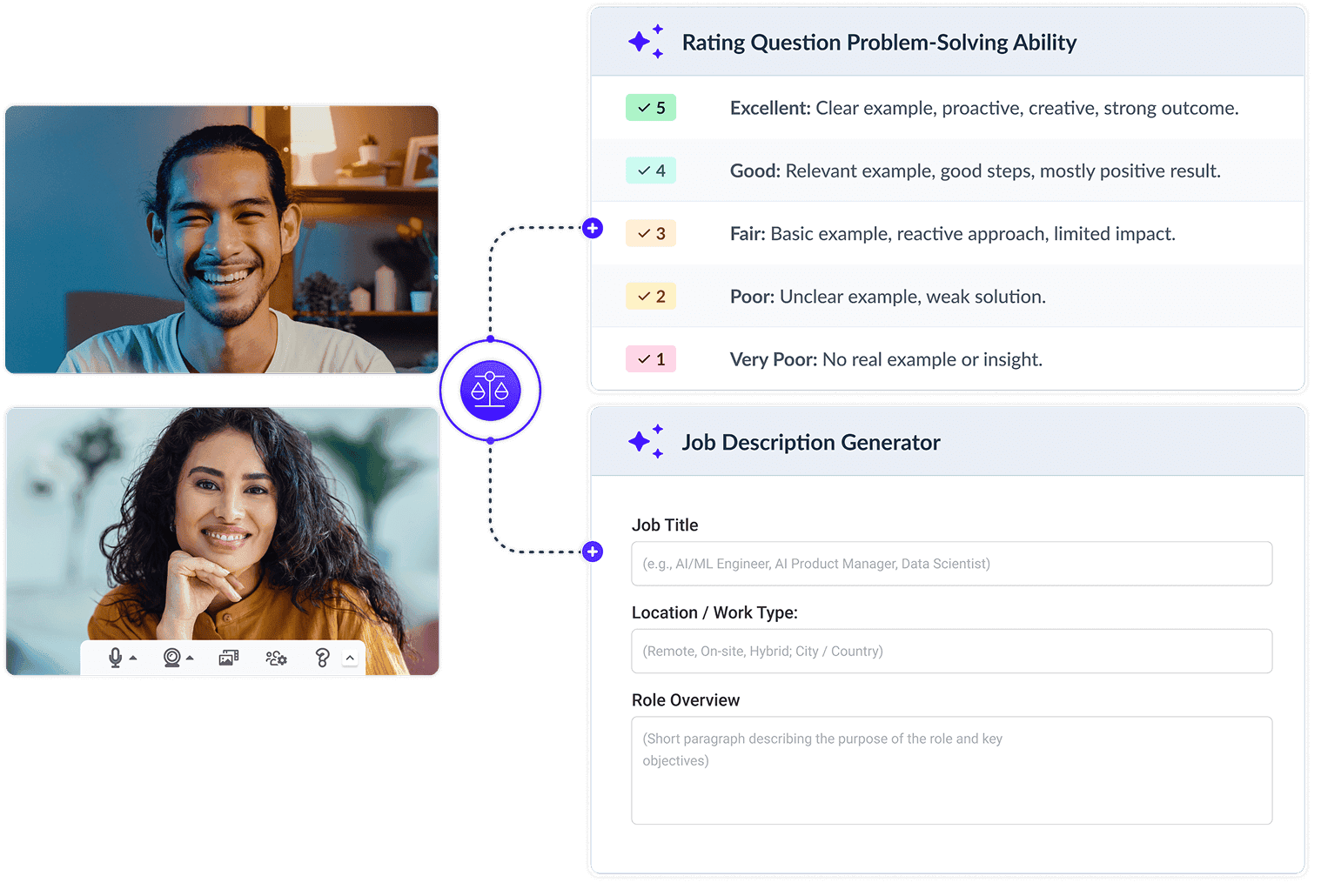

Based on its documented commitment to ethical AI, VidCruiter’s approach to AI tools emphasizes human oversight: AI-assisted decision-making rather than automated decision-making. That means, for example, using AI for interview note-taking rather than candidate scoring. This approach avoids ethical risks, reduces administrative and data processing burdens, and allows recruiters to focus on meaningful candidate interactions.

Organizations should request information about how a tool’s training data is sourced and updated, the cadence and type of monitoring and testing that vendors perform on their AI models/features, and evidence of regular bias audits. Where available, also take into account independent validations, reviews or studies on a tool’s effectiveness and fairness.

Has the vendor made it clear what the AI analyzes or actually does in the background to produce its outputs? Organizations should be wary of tools that enable AI-led decisions but cannot provide a robust and easy-to-follow explanation of their technical workings and reasoning process. Transparent and explainable tools also help recruiters communicate well with candidates about how AI influences hiring decisions.

What to Ask Vendors About Responsible AI Recruitment

Workplace law firm Fisher Phillips provides some example questions you can ask vendors as you explore various AI recruiting tools, including:

Look for precise and comprehensive data collection and usage policies that instill confidence that candidate and organizational data will be held and processed securely, and used only for its intended purposes. Check that the AI system is compatible with relevant data privacy and security legislation. Obtaining candidate consent and giving people control over their personal data is best practice, and central to a number of privacy laws, such as the EU’s General Data Protection Regulation (GDPR) and the California Consumer Privacy Act (CCPA).

It’s a myth that all AI tools on the market have been carefully tested and are guaranteed to measure or predict what they say they measure or predict. If a tool makes bold claims about an AI’s predictive powers, but how its algorithm works is hidden or unknowable, it’s essential to obtain prediction accuracy metrics. Organizations should also seek clarity on the construct validity of different tools — what is the evidence that they measure a specific trait or quality?
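As a rough illustration of what prediction accuracy metrics mean in practice, the Python sketch below computes accuracy, precision and recall from pairs of tool predictions and observed outcomes. It is a minimal sketch under stated assumptions: the function name, the sample counts, and the idea of pairing a "recommended" flag with a later "performed well" flag are all hypothetical, and a vendor's own validation study may define and measure these quite differently.

```python
def prediction_metrics(pairs):
    """Summarize (predicted_positive, actual_positive) boolean pairs.

    Hypothetical framing: 'predicted' = the tool recommended the candidate,
    'actual' = the candidate later met an agreed success measure.
    """
    tp = fp = fn = tn = 0
    for predicted, actual in pairs:
        if predicted and actual:
            tp += 1          # recommended and succeeded
        elif predicted and not actual:
            fp += 1          # recommended but did not succeed
        elif not predicted and actual:
            fn += 1          # not recommended but succeeded
        else:
            tn += 1          # not recommended and did not succeed
    total = tp + fp + fn + tn
    return {
        "accuracy": (tp + tn) / total if total else None,
        "precision": tp / (tp + fp) if (tp + fp) else None,
        "recall": tp / (tp + fn) if (tp + fn) else None,
    }


# Made-up validation sample of 100 past candidates (illustrative only).
sample = (
    [(True, True)] * 30
    + [(True, False)] * 10
    + [(False, True)] * 20
    + [(False, False)] * 40
)
metrics = prediction_metrics(sample)
print(metrics)
```

In this made-up sample, the low recall would mean the tool overlooked many candidates who went on to succeed, which is the kind of gap worth raising with a vendor. Asking for the same figures broken down by demographic group can also show whether a tool is less accurate for some applicants than others.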

Employers will face the consequences of any mistakes, deceptive conduct or compliance breaches resulting from the use of AI tools. Therefore, in addition to assessing the functionality of specific tools, it is vital to have trust in the vendor — including their ethical AI ethos. Has the vendor stated a commitment to developing and refining fair and fit-for-purpose tools through ongoing testing and monitoring? Do they provide clear service level agreements and responsive support?

Who’s Keeping Your Vendor Accountable?

Prioritize vendors that have considered how to effectively govern their ethical AI tools throughout the conception, design and development stages. For example, VidCruiter established an AI Ethics Committee with a board-like structure which includes key staff and is in the process of considering external expertise.

Once adopted, AI tools should be evaluated regularly. Request and review bias audit documentation from the vendor, and also carefully review your own hiring outcome statistics and interview scoring records for adverse impact.

Useful methods for auditing AI recruitment outcomes for bias could include:
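One widely used check of this kind compares selection rates across groups. Under the four-fifths guideline in the U.S. Uniform Guidelines on Employee Selection Procedures, a group whose selection rate is below 80% of the highest group's rate is generally treated as a signal of possible adverse impact. The Python sketch below is a minimal illustration, not a legal test: the group labels, sample counts and function names are all hypothetical, and real audits typically add statistical significance testing and legal review.

```python
from collections import Counter


def selection_rates(records):
    """Return {group: selected / applicants} from (group, was_selected) pairs."""
    applicants = Counter()
    selected = Counter()
    for group, was_selected in records:
        applicants[group] += 1
        if was_selected:
            selected[group] += 1
    return {g: selected[g] / applicants[g] for g in applicants}


def impact_ratios(records):
    """Divide each group's selection rate by the highest group's rate."""
    rates = selection_rates(records)
    top = max(rates.values())
    if top == 0:
        # Nobody was selected, so the ratio is undefined for every group.
        return {g: None for g in rates}
    return {g: rate / top for g, rate in rates.items()}


def flag_groups(records, threshold=0.8):
    """List groups whose impact ratio falls below the threshold (default 4/5)."""
    ratios = impact_ratios(records)
    return sorted(g for g, r in ratios.items() if r is not None and r < threshold)


# Made-up screening outcomes: group "A" 48 of 80 advanced, group "B" 12 of 40.
records = (
    [("A", True)] * 48 + [("A", False)] * 32
    + [("B", True)] * 12 + [("B", False)] * 28
)
print(selection_rates(records))
print(impact_ratios(records))
print(flag_groups(records))
```

A flagged group is a prompt to investigate that stage of the process, not proof of discrimination. Small applicant counts can swing these ratios widely, so it helps to run the check per hiring stage and over a long enough period to have meaningful numbers.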

Best Practices Checklist For Ethical Adoption of AI Tools for Hiring

Consider how AI use aligns or conflicts with your corporate values, the risk-benefit equation, and potential impacts on staff and candidates.

Clarify your AI recruitment policies and the specific tasks where AI can safely add value before you explore tools, to help narrow down your search.

Research tools and develop a short-list to assess against your criteria for accuracy, fairness, compliance, explainability, usability, and data protection.

Create a panel representative of diverse backgrounds and demographics to review assessment data and guide software selection.

Design AI-assisted workflows that support best practices like structured interviews, human final decision-making authority, and record-keeping.

Alongside your communications and training on how to use the tool itself, provide guidance to hiring teams on bias, AI’s limitations and its ethical use.

Redesign your employer branding content and recruitment templates to include clear explanations for candidates about AI use.

Regularly audit AI tools’ performance, review vendors’ terms, and use internal data to monitor hiring outcomes for fairness and effectiveness.

Ethical AI hiring isn’t an oxymoron. Despite lingering questions over the validity and fairness of AI in hiring, organizations that thoughtfully choose and apply AI tools can benefit from faster and fairer recruitment processes.

HR leaders who care about doing the right thing, yet want a competitive advantage from AI, should evaluate their current and prospective AI recruitment tools against the guidelines provided here before progressing from experimentation to full-scale implementation.

AI hiring is legal. But organizations need to be aware that they remain liable for any breaches of the law that arise from using AI outputs — such as charges brought by the EEOC for employment discrimination. Organizations are accountable even if the issue occurs due to errors or biases introduced by third-party recruiting software.

An AI hiring tool can introduce biases when the data it’s trained on is inaccurate or the model is poorly designed. Using transparent, explainable AI systems created by vendors committed to AI ethics is smart. For HR leaders already using third-party AI tools, the best way to determine if the system’s outputs are biased is to audit your recruitment process and outcomes.

Yes, candidates should be told about how AI is used in your recruitment process, particularly when they will be directly interacting with AI-powered tools. Open and honest communication about the role of AI promotes trust with candidates, and is also wise given the rise of legislation mandating greater employer transparency around AI recruitment.

In many regions, using AI interview scheduling and documentation is permitted when privacy, consent, and data protection requirements are met. Candidates should be informed when interviews are recorded or transcribed, and organizations must handle interview data securely and in compliance with applicable employment and data protection regulations.
