Why You Need an AI Framework for Recruiting

A guide to ethical AI use in hiring: how to approach decision-making, privacy protection, policy compliance, and more.

Artificial intelligence (AI) in recruiting means using AI tools during the recruitment and hiring process. AI tools like generative AI, large language models (LLMs), and natural language processing (NLP) can perform tasks that typically require human intelligence.

In this guide, we’ll walk you through the role of AI in recruiting today: where it adds value, where it requires oversight, and how to integrate it responsibly into your talent acquisition process.

AI recruiting involves using artificial intelligence and machine learning systems to enhance the recruitment process. The term AI covers a variety of technologies, including generative AI, machine learning algorithms, NLP, LLMs, text-to-image and text-to-video models, speech recognition and generation, and predictive analytics.

This AI technology can learn from data, recognize patterns, make decisions, and solve problems. Used as an HR tool, AI can help automate repetitive tasks and scale and maintain candidate communication.

Currently, recruiters use AI for various tasks, such as developing job descriptions, sourcing talent, creating and scoring assessments, screening applicants, and communicating with candidates. Some AI tools pose more significant risks than others, which is why it’s essential to establish a framework before adopting AI in recruiting.

Important consideration for using AI in recruiting

The European Union (EU) and the Canadian Government have laws that govern the use of AI tools in hiring because this use is classified as a high-risk activity that may adversely impact protected groups. Employers should exercise caution and due diligence when using AI in recruiting to prevent discrimination, ensure fairness, and maintain legal compliance.

Research has found that more than two-fifths (43%) of HR leaders say their teams are feeling overwhelmed by new responsibilities.

Teams are trying to meet goals and candidate expectations while also being pressured to move faster. Using AI in recruitment is becoming increasingly necessary to prevent burnout and stay competitive.

AI tools automate top-of-funnel tasks like sourcing, screening, and assessments so you can focus on what you do best: building relationships and making confident, human-centered hiring decisions. When used responsibly and paired with interview intelligence, AI tools can even increase the chances of hiring the best fit for the role.

According to the 2023 ISE Student Recruitment Survey, more than a quarter (28%) of employers are now using AI as part of their hiring process, a significant increase from 9% in 2022.

A Mercer report found that 55% of human resource leaders in the U.S. use predictive algorithms to support hiring.

Let’s explore the different systems that power AI recruiting tools and how they can support the hiring process.

Generative AI

This technology can create new content, like images, text, or even music, based on patterns it learns from existing data. It can be used to create job descriptions, emails, or even chatbot responses.

Machine Learning (ML) algorithms

These programs can learn from data, recognize patterns, and make decisions without being explicitly programmed for every scenario. For example, they can sift through large volumes of résumés and applications to identify top candidates based on predefined criteria. However, exercise caution when using AI for screening, as it could filter out qualified applicants or perpetuate implicit biases.

Natural Language Processing (NLP)

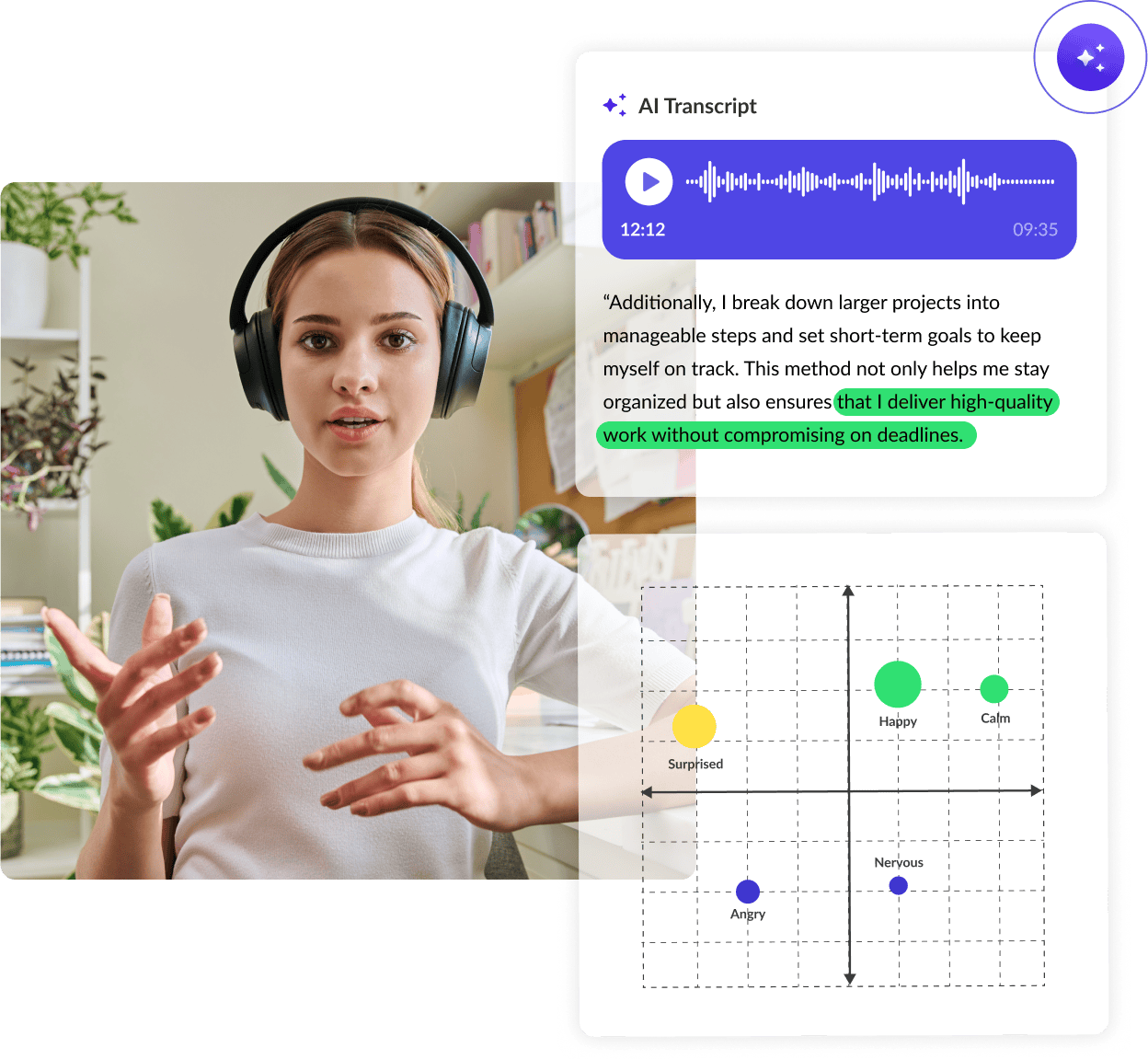

NLP helps computers understand text, speech, and even emotions in language. You can use it to analyze resumes, cover letters, and candidate communications to extract relevant insights. Be careful, though, as the output from these systems can be unreliable or inaccurate.

Large Language Models (LLM)

LLM systems are trained on vast amounts of text data to understand context and generate coherent responses. Use them to craft compelling job postings or automate routine communication tasks, like scheduling interviews and sending follow-up emails.

Text-to-Image and Text-to-Video Models

These AI systems use algorithms to turn text descriptions into visual content. They can be used to make visually appealing job advertisements, company culture videos, or virtual workplace tours. Note that many regulatory bodies closely monitor AI use in recruitment marketing materials.

Speech Recognition and Generation

Speech recognition technology allows computers to understand and transcribe spoken language. Speech generation, on the other hand, enables computers to produce human-like speech from text, which can be used to personalize candidate communications.

Predictive Analytics

This involves using data, statistical algorithms, and machine learning techniques to predict future outcomes. It helps businesses make informed decisions by forecasting trends and behaviors based on historical data, like the likelihood of candidate success and retention.

Why more teams are using generative AI in recruitment

Generative AI adoption in recruitment is accelerating. LinkedIn’s Future of Recruiting 2024 report found that the number of organizations integrating or testing Gen AI tools rose from 27% to 37% in just one year.

So why the surge? Generative AI enables busy small and mid-sized recruiting teams to produce polished, personalized outputs (like candidate emails or job descriptions) that used to require a full communications or HR team.

A 2025 Resume Builder survey found that 51% of companies already use AI in recruitment, with that number projected to rise to 68% by year’s end. Adoption is growing for good reason: AI technology is helping teams work more efficiently and make better hiring decisions.

Here’s how AI tools are improving the recruitment process from end to end.

The quality of an interview can vary widely from one interviewer to the next, especially across multiple interviewers or locations. AI interviews reduce that variability by standardizing how interviews are conducted, scored, and reviewed.

When paired with structured interview questions and scoring rubrics, AI helps your team stay consistent, minimize bias, and ensure every candidate is treated the same.

How VidCruiter uses AI in video interviewing

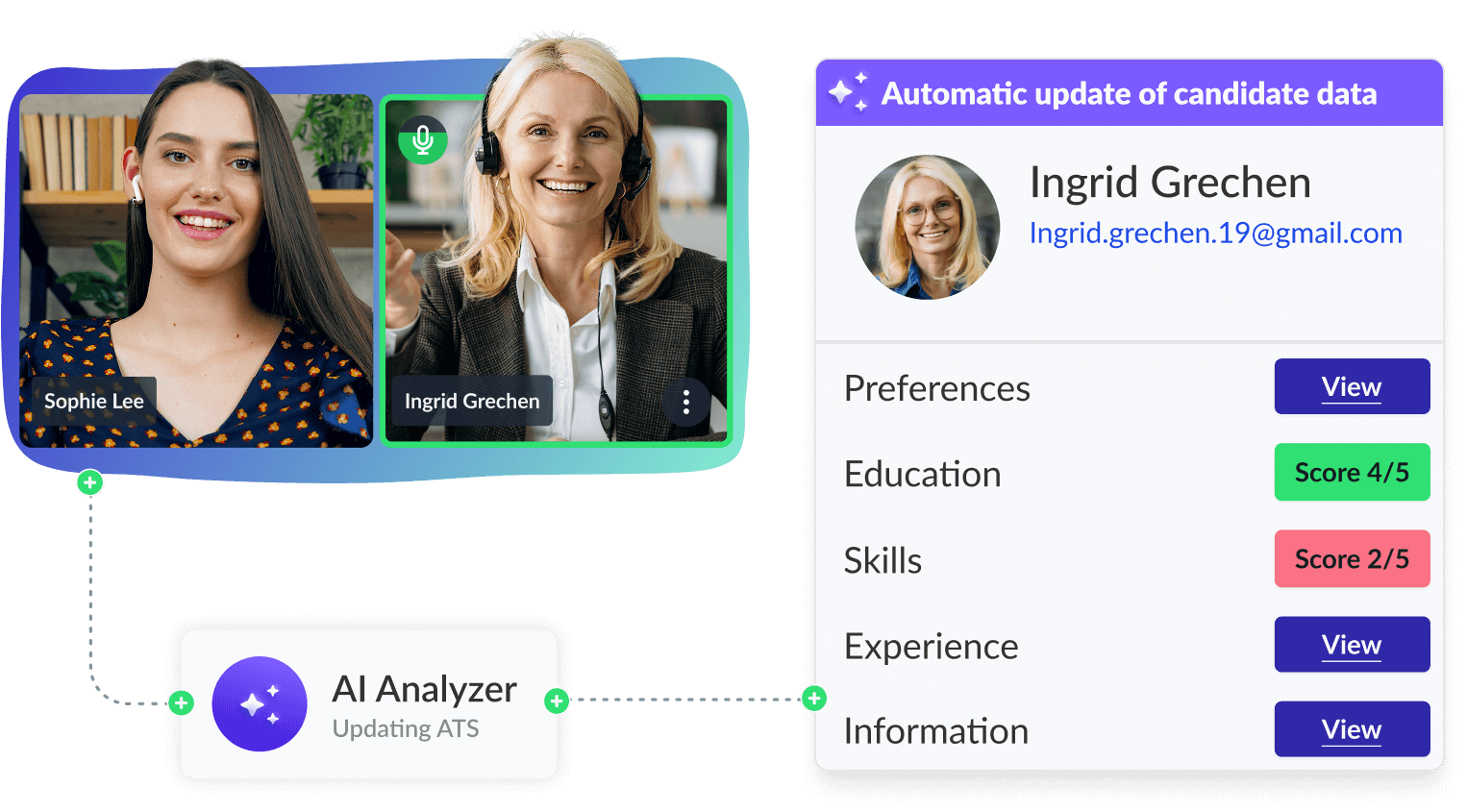

VidCruiter's pre-recorded interview platform generates AI interview notes and post-interview summaries so you can focus on the candidate, not your keyboard. You retain full control and final decision-making, while the AI supports consistency and speed.

According to a 2025 global ManpowerGroup report, 74% of employers are struggling to find skilled talent. AI recruiting can make sourcing candidates much easier, as it can automatically rank potential hires (applicants and passive talent) based on how closely their qualifications match those of successful candidates, considering factors like education, skills, achievements, and interests in their resumes.
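As a rough illustration of the matching idea described above, the Python sketch below ranks candidates by how much their listed skills overlap with a role's required skills. The candidate names, skill sets, and the use of Jaccard overlap as the score are all assumptions made for illustration; commercial tools draw on richer signals, and their exact methods are generally not public.

```python
def skill_overlap(candidate_skills: set[str], required_skills: set[str]) -> float:
    """Jaccard similarity: shared skills divided by all distinct skills."""
    combined = candidate_skills | required_skills
    if not combined:
        return 0.0
    shared = candidate_skills & required_skills
    return len(shared) / len(combined)


def rank_candidates(
    candidates: dict[str, set[str]], required_skills: set[str]
) -> list[tuple[str, float]]:
    """Return (name, score) pairs sorted from best to worst match."""
    scored = [
        (name, skill_overlap(skills, required_skills))
        for name, skills in candidates.items()
    ]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)


# Hypothetical data for illustration only.
required = {"python", "sql", "communication"}
candidates = {
    "Candidate A": {"python", "sql", "excel"},
    "Candidate B": {"communication", "sales"},
    "Candidate C": {"python", "sql", "communication"},
}

ranking = rank_candidates(candidates, required)
for name, score in ranking:
    print(f"{name}: {score:.2f}")
```

A recruiter would still review a list like this, since a simple overlap score can miss equivalent skills that are described in different words.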

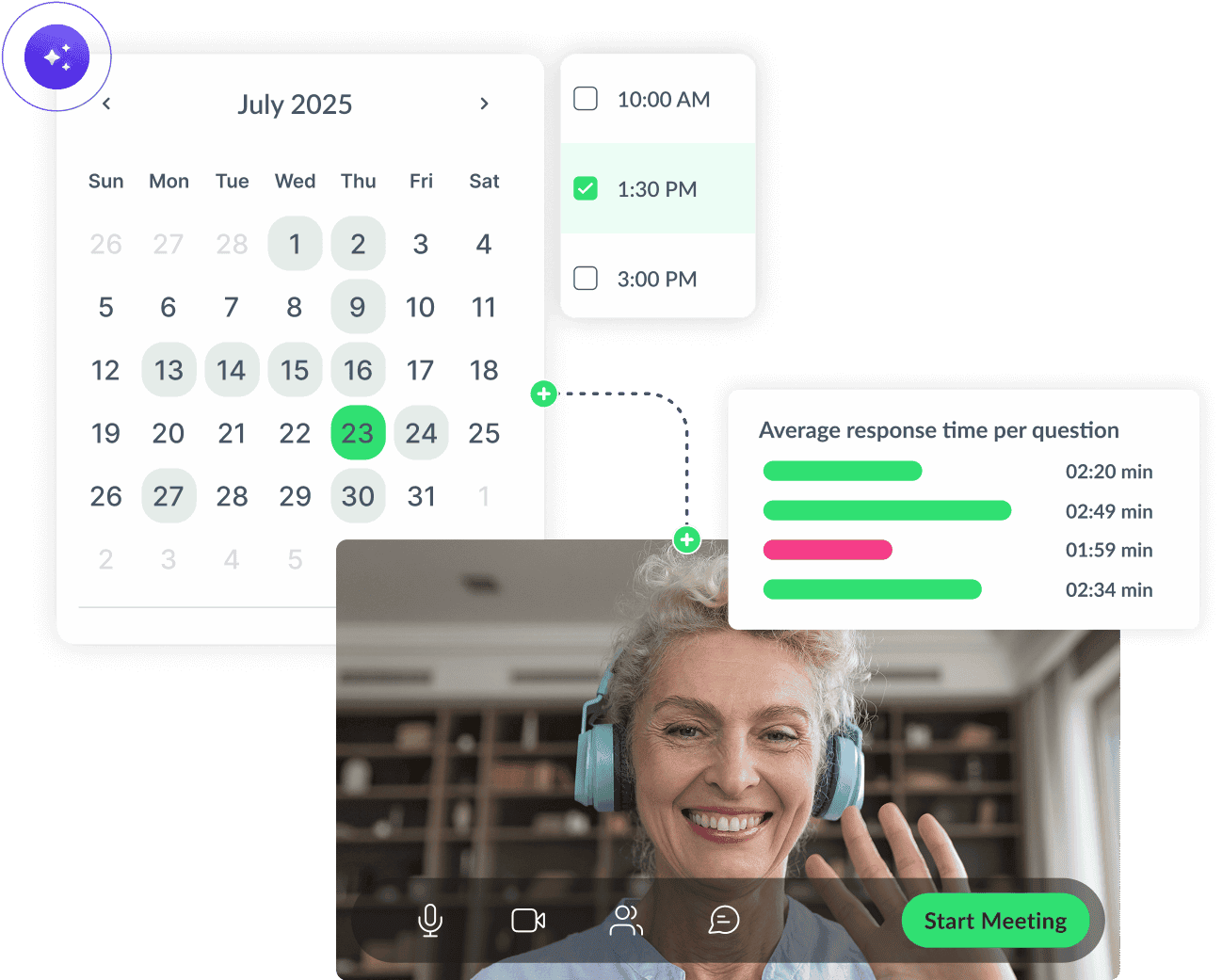

AI can handle many repetitive tasks that can take up much of a recruiter’s time, like interview scheduling. While some AI automations will still need oversight, they will save your organization money and buy back time for strategic work like engaging candidates or making decisions.

AI can automate and streamline many time-consuming tasks, which can speed up the hiring process and reduce your time to hire. Plus, since AI can process more data in less time, it can provide more detailed information on candidates, helping you make better decisions and fill roles faster.

Some organizations use AI to screen job candidates, which can significantly speed up hiring processes, but also carries certain risks. The EEOC has indicated that employers could face liability if their AI tools, including software and algorithms, lead to unjust hiring outcomes.

Specifically, liability can arise if these technologies fail to adequately address requests for reasonable accommodations in the hiring process, or if they intentionally or unintentionally exclude applicants with disabilities who are capable of performing the job with reasonable accommodations.

While it may seem counterintuitive, you can use AI to send tailored messages based on the candidate’s interests, skills, and stage in the hiring process. AI can also analyze data from previous interactions and candidate profiles to craft messages that resonate with each individual to create a better candidate experience.

When used correctly, AI can potentially help mitigate hiring biases by spotting opportunities for organizations to foster fairer and more inclusive hiring practices.

An AI recruiting tool could review job postings and candidate communications for biased or exclusive language that could discourage particular groups from applying and suggest more inclusive and impactful alternatives.

It could also uncover candidates that recruiters may overlook due to health issues, employment gaps, lack of formal education, or non-traditional backgrounds. This approach widens the candidate pool and potentially increases workplace diversity.
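The job-posting language review mentioned above can be sketched in a few lines of Python. The term list and suggested alternatives here are hypothetical examples, not a validated lexicon, and real tools likely rely on more sophisticated language models than a word list.

```python
import re

# Hypothetical term list for illustration; a real review would use a vetted lexicon.
FLAGGED_TERMS = {
    "rockstar": "skilled professional",
    "ninja": "expert",
    "young": "motivated",
    "manpower": "workforce",
}


def flag_exclusive_language(text: str) -> list[tuple[str, str]]:
    """Return (flagged term, suggested alternative) pairs found in the text."""
    findings = []
    for term, suggestion in FLAGGED_TERMS.items():
        # Whole-word, case-insensitive match, so "youngest" is not flagged by "young".
        if re.search(rf"\b{re.escape(term)}\b", text, flags=re.IGNORECASE):
            findings.append((term, suggestion))
    return findings


posting = "We need a young coding Ninja to join our team."
findings = flag_exclusive_language(posting)
for term, suggestion in findings:
    print(f"Consider replacing '{term}' with '{suggestion}'")
```

The output is a list of suggestions for a human to accept or reject, which keeps the recruiter in control of the final wording.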

Could AI close the gender gap in STEM?

A 2023 University of Melbourne study found that using AI in recruitment almost doubled the number of women assessed to be among the top 10 percent of performers, which means using it would likely result in companies hiring more women for similar types of tech roles.

AI tools have many potential benefits. Still, if used incorrectly or recklessly, the negative impacts on your organization and society could be severe. For more information, read our deep dive on the risks involved in using AI in HR.

Here are a few of the most serious challenges of AI in recruiting, and our recommendations to help you prevent or overcome these challenges.

Whether you’re using real or synthetic training data for an AI model, it’s nearly impossible to ensure that it’s free of bias, which could result in inaccurate output. Algorithm errors could also lead to AI tools providing incorrect information or basing decisions on irrelevant criteria. And so, these tools might misinterpret data or miss important contexts that human recruiters would catch.

As a result, AI can perpetuate these biases and make discriminatory decisions, or base choices on criteria that have nothing to do with the role.

Hiring Data

Bias begins here if the human-selected data reflects past inequities or unbalanced hiring practices.

Algorithm

The algorithm learns from that data, reinforcing patterns instead of correcting them.

AI output

Without human review, biased results can slip through unchecked.

For example, Amazon shelved plans to use an AI hiring tool in 2018 after it showed prejudice against female applicants. There’s also a risk of discrimination against candidates with disabilities, as AI tools may not adequately assess candidates with speech impediments or physical limitations.

Investigate potential algorithmic biases by regularly auditing your AI-powered recruitment systems (combining internal and third-party audits), and training AI using diverse data sets.
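One check an audit like this might include is a comparison of selection rates across groups. The Python sketch below computes each group's selection rate and its ratio to the highest rate, then flags ratios below 0.8, the "four-fifths" rule of thumb used in US adverse impact analysis. The group labels and counts are made up for illustration, and a ratio on its own neither proves nor rules out discrimination.

```python
def selection_rates(outcomes: dict[str, tuple[int, int]]) -> dict[str, float]:
    """Map each group to selected / applied. Values are (selected, applied)."""
    return {
        group: selected / applied
        for group, (selected, applied) in outcomes.items()
        if applied > 0
    }


def adverse_impact_report(
    outcomes: dict[str, tuple[int, int]], threshold: float = 0.8
) -> dict[str, dict[str, object]]:
    """Compare each group's selection rate to the highest group's rate."""
    rates = selection_rates(outcomes)
    highest = max(rates.values(), default=0.0)
    report = {}
    for group, rate in rates.items():
        # If nobody was selected at all, there is no disparity to measure.
        ratio = rate / highest if highest > 0 else 1.0
        report[group] = {"rate": rate, "ratio": ratio, "flagged": ratio < threshold}
    return report


# Hypothetical screening outcomes: (candidates advanced, candidates screened).
outcomes = {"Group A": (50, 100), "Group B": (30, 100)}

report = adverse_impact_report(outcomes)
for group, stats in report.items():
    print(f"{group}: rate={stats['rate']:.2f}, ratio={stats['ratio']:.2f}, flagged={stats['flagged']}")
```

A flagged group is a prompt for closer human and third-party review, not a verdict.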

Job descriptions filled with unnecessary criteria can cause AI ranking systems to overlook qualified candidates. AI may down-rank or filter out candidates for reasons that would not impact performance, such as career gaps or lack of a university degree. In other biased instances, AI analyzing video appearances flagged individuals for wearing glasses or headscarves, and vocal analysis tools have shown biases against regional accents.

These mistakes could have serious repercussions as more companies use AI tools in selection processes. For example, a candidate who gets unfairly filtered out based on an arbitrary decision might not just lose out on one job but get shut out from the workforce entirely. AI tools could also expose organizations to legal action if candidates can prove discriminatory or unfair treatment.

Using AI in recruitment is most successful when it supports your team, not replaces it. The table below breaks down where AI can streamline your hiring process and where human oversight is still essential for fairness, accuracy and candidate experience.

| The task | What AI does | What the recruiter does |
| --- | --- | --- |
| Job description writing | Generates job descriptions and interview scorecards based on inputs like job title, location, and department. | Reviews and refines the output to ensure accuracy and alignment with role expectations, team needs, and DEI goals. |
| Candidate sourcing | Scans job boards, databases, and internal systems to identify potential candidates based on role requirements. | Oversees AI-driven sourcing results and refines search criteria to improve candidate quality and alignment. |
| Resume screening | Scans resumes, extracts key qualifications, and ranks candidates based on how well they match the job requirements. | Reviews AI-ranked resumes with a critical eye, validating top picks and watching for overlooked talent. |
| Candidate matching | Compares candidate profiles to job criteria and predicts role fit using historical hiring data. | Interprets AI-matching insights and makes final decisions based on team dynamics, values, and long-term fit. |
| Automated communication | Provides updates, answers FAQs, and helps with interview scheduling. | Personalizes outreach, follows up with top candidates, and adds a human touch back into communication. |
| AI interviewing | Performs real-time analysis during live video interviews (one of several types of AI interview). | Uses AI interviewing tools to conduct better-structured interviews, reviewing AI-generated feedback with human judgment. |

While AI tools like chatbots save time, they can also make the hiring process feel less personal for both hiring managers and candidates. As a result, both parties might feel more disconnected from the process.

Additionally, AI’s generic messages may not fully address candidates’ queries or concerns, leading to a potentially frustrating candidate experience. If you have ever called a customer service line and gotten annoyed because you had to rephrase your question multiple times or got stuck in a conversational loop, then you know how painful an inhuman communication experience can be.

Use AI to create structure and speed, but build in early opportunities for human interaction. Aim to include at least one human touchpoint within the first few candidate interactions (for example, a short phone call or a live check-in).

Researchers often describe emerging AI tools as a “black box” due to their lack of transparency. Most users do not understand how AI algorithms work or why they make certain choices, and many companies do not want to disclose the tools they use. At the same time, AI software providers do not want to discuss the inner workings of their algorithms due to intellectual property concerns.

Vendors often do not know exactly how their models are making decisions. AI systems can hallucinate facts or base decisions on irrelevant proxies without vendors knowing or being able to control them. These factors lead to a lack of transparency, which means job candidates have limited insight into how companies use AI to evaluate and track them.

Transparency is limited, but accountability isn’t

While you may not be able to explain every algorithmic decision, you can be open with candidates about how you use AI in your hiring process and how it stores their personal information. Let candidates know when AI is involved and offer opt-outs when and where possible.

AI recruitment tools can create long-term cost savings in the form of increased efficiency, but the initial and ongoing investments may pose challenges. Setup expenses typically include purchasing the tool, integrating it into your existing workflow, and training staff to use it effectively.

As for the ongoing commitment, you will need to monitor performance, address emerging biases, and invest in system improvements over time. For organizations without dedicated support, the constant monitoring and time investment can be steep.

Start small by incorporating AI in the places you’ll get the most value and scale strategically. Look for an interview management system, like VidCruiter, that offers flexible pricing models. That way, instead of committing to a fixed cost up front, you can gradually expand your use of AI tools.

While AI companies continue to launch new AI-powered features, a few notable organizations already use AI in their hiring and recruitment processes. It is important to note that future legislation may alter how these organizations operate these tools and processes.

UK Army recruiters are using artificial intelligence to speed up hiring. According to Capita, the organization that manages UK Armed Forces recruitment, application documents can be up to 100 pages long and take up to an hour to evaluate manually.

“Face-to-face contact and engagement with serving personnel will always be at the heart of Army recruitment, but there are parts of the process which have become quicker, simpler, and more effective through the use of artificial intelligence technology,” said a Capita spokesperson.

“We are deploying these tools to streamline recruitment, get people into basic training faster, and help prevent candidates from dropping out.”

Despite Amazon’s past controversies, a 2021 internal document revealed that the company has been developing an Automated Applicant Evaluation system to determine which job applicants have the most potential for success. The software looks for similarities between applicants’ résumés and those of current Amazon workers in the same role. Candidates that the AI tool identifies are then fast-tracked for interviews.

According to Nathalie Scardino, Salesforce’s interim chief people officer, the company uses AI to review applications and shortlist candidates. “Last year, we got more than 2 million job applications, so it’s helping us identify top talent that we may have missed otherwise,” Scardino said in an interview with Forbes.

The company is also using AI to source talent within the organization by analyzing its current workforce and identifying potential internal candidates who could be a fit for open positions.

Microsoft, along with companies like Amazon, Unilever, and Koch Industries, recently worked with the Center for Industry Self-Regulation (CISR) to publish a set of principles for trustworthy AI in hiring and recruiting.

The principles cover topics like transparency, fairness, non-discrimination, technical robustness, safety, governance, and accountability for AI in recruiting. They also outline protocols for certifying and holding AI vendors accountable, not just the employers using their services.

While the rise of AI recruitment has led to fears of AI tools replacing human talent, it’s more likely that AI will play a supporting role, allowing recruiters to reprioritize certain activities.

Additionally, human oversight is vital for maintaining ethical AI use in the hiring process, so AI recruitment is unlikely to replace recruiters or HR professionals. But it will change what these jobs look like.

Since AI tools can eliminate many day-to-day repetitive tasks and time-consuming analytical tasks like processing large data sets, the value of the human touch and expertise becomes even more critical.

Recruiters will have more time to focus on tasks that require human attention, like connecting with potential candidates, building relationships, decision-making, and optimizing the hiring experience and candidate journey as a whole. Lean into this shift and build soft skills if you want to lead the future of hiring.

Key skills recruiters will need in 2026 and beyond

Since job seekers can use generative AI to compose and enhance their resumes and cover letters, these written materials will likely become less relevant sources of evaluation. Instead, recruiters may turn to a candidate’s social media presence, LinkedIn profile, interviews, or skill assessments to better understand their role-related skills, knowledge, and abilities.

Are resumes going extinct?

According to a 2024 Canva survey, 71% of respondents say that purely text-based résumés will be obsolete in five years.

Some large organizations test job seekers’ qualities through AI-powered soft skills and personality assessments, which use data-driven behavioral insights to match candidates to open roles. As with any selection tool, organizations may be held liable for any unjust outcomes attributed to this type of use.

Additionally, recruiters may need to get more creative with their technical skill testing. This may mean leveraging more visual, audio, or video-based mediums, or doing timed assessments that candidates can’t easily complete with AI tools.

As AI technology rapidly advances, more recruitment software companies are incorporating it into their products. Here are a few recent innovations in AI and recruiting.

LinkedIn's new AI-assisted recruiting tool, Recruiter 2024, leverages generative AI to help recruiters craft better search strings to generate stronger candidate lists. Similar to searches with ChatGPT, recruiters can use more conversational language to narrow down their search criteria. The tool will also provide suggestions outside of what recruiters might think they are looking for, broadening their scope of potential candidates.

The FoundIt platform recently unveiled a new outreach feature that leverages generative AI to help recruiters draft and send personalized candidate emails at scale. According to FoundIt, 100 companies have experienced an 80% increase in recruiter productivity, a 450% reduction in recruitment costs, and a 200% increase in candidate response rates.

LinkedIn’s Recruiter 2024 will also have AI-assisted messaging, enabling recruiters to draft highly personalized InMails in one click. According to the platform, recruiters see an increase of more than 40% in InMail acceptance rates when they personalize candidate outreach.

Tengai, a Swedish candidate screening software company, recently rolled out an interactive AI avatar recruiting assistant that can pre-screen applicants. It uses Natural Language Understanding (NLU) to create a human-like, two-way conversation. The avatar also mimics human behavior like nodding, blinking, and listening, and it can understand different accents.

This kind of technology may help save you time, but you need to be careful if you are thinking about using AI platforms for hiring decisions.

There is no denying that AI is a game-changer for recruiting. From writing job descriptions to automating tasks to maintaining close candidate communications, AI can complete tasks that used to take recruiters hours in mere minutes or seconds.

These advancements can be equally exciting and nerve-wracking for recruiters, but remember to think of AI as a recruiting assistant, not a replacement. It’s a tool designed to make your life easier, free up more of your time for critical engagement and decision-making tasks, and get the right people in the right roles in a much more efficient, organized, and personal way.

Ready to see AI in action?

Explore how VidCruiter thoughtfully integrates AI interview notes and AI-generated summaries into the video interviewing process, so you can fully focus on your candidates. Request your demo now.

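Depending on the stage of the hiring process, AI can be used to develop job descriptions, source talent, create and score assessments, screen applicants, and communicate with candidates.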

AI is augmenting, not replacing, roles related to hiring. Using AI in HR can make professionals and recruiters more efficient and productive by eliminating time-consuming and repetitive tasks like writing job descriptions, candidate outreach, and data analysis. However, striking a balance between AI efficiency and human judgment is key.

AI recruitment tools are highly effective at filtering large volumes of data and identifying candidates who match the required qualifications. However, their accuracy depends on the quality of the data fed into the system and the design of the algorithms.

To keep your AI recruitment tools effective and fair, a team that includes HR, compliance (this can be a consultant), and IT should review them at least quarterly. This team should assess performance metrics, check for emerging bias, and adjust algorithms as needed.

Responsible AI should be transparent, inclusive, and compliant with employment laws and regulations while prioritizing data privacy and security. More specifically, to use AI responsibly is to use it in a supportive role.

We recommend using it to generate content, scale candidate communications, drive recruitment automation, and analyze processes, but only if a human is reviewing the output to ensure accuracy. We caution against using it as a primary decision-making tool (i.e., screening, filtering, or evaluating candidates) because of the potential harm caused by inaccuracy.
